So sitemap is basically an xml file listing the pages and content of a website. You can read more about robots.txt rules from. We will add the sitemap in the next step. The last line tells crawlers where to find the the sitemap for the website (make sure to replace the with your own domain). Two first lines tell crawlers that the entire site can be crawled. Robots.txt file needs to be available in the website root like this So all we need to do is to create a new file named robots.txt to the /public folder.Īdd the following inside the robots.txt file. A sitemap tells Google which pages and files you think are important in your site, and also provides valuable information about these files: for example, for pages, when the page was last updated, how often the page is changed, and any alternate language versions of a page. Search engines like Google read this file to more intelligently crawl your site. SitemapĪ sitemap is a file where you provide information about the pages, videos, and other files on your site, and the relationships between them. This is used mainly to avoid overloading your site with requests it is not a mechanism for keeping a web page out of Google.

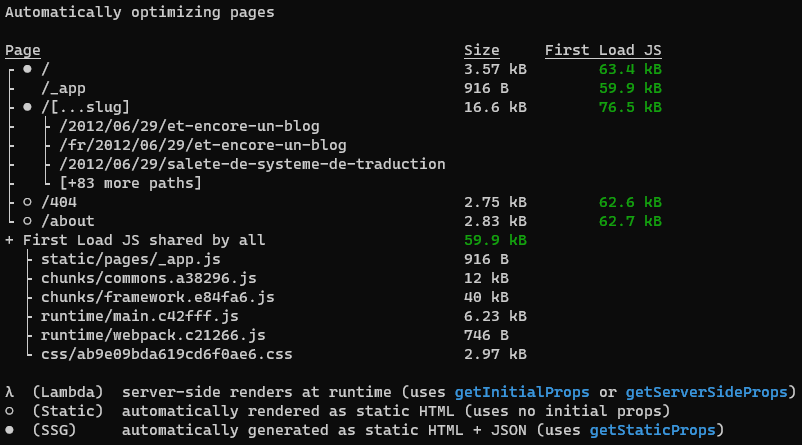

Ok so if sitemap and robots.txt are important for my website SEO, what are and what do they actually do? Robots.txtĪ robots.txt file tells search engine crawlers which pages or files the crawler can or can't request from your site. In this article I will explain what sitemap and robots.txt are and show you how to add them to your own Next.js application. Adding a sitemap and robots.txt are two major ones. There are bunch of things you can do to improve your website SEO. In order for my blog posts to be properly discovered by Google, I needed to make my site SEO friendly. Read more: How I converted my website from Wordpress to Jamstack I recently built my own blog with Next.js and I wanted to make sure that my blog posts would be discovered properly by Google (and other search engines, but I'll just say Google because let's be honest, who Bings?). This is especially true for blogs because you want to have your blog posts shown by search engines. In production first run npm run build then run pm2 start npm - start.It's important for any website to have good search engine optimization (SEO) in order to be discovered and visible in search engines such as Google. Update your package.json file with the code below. Here is the complete steps and code to implement XML Sitemap in next js Create server.js in the root of your project with the following code const express = require('express') Ĭonst bodyParser = require('body-parser') Ĭonst dev = _ENV != 'production'
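
The server needs a sitemap.xml file to serve. This article relies on one generated online, but to show what such a file contains, here is a minimal sketch that builds one in plain Node.js. The helper names `escapeXml` and `buildSitemap` and the example.com URLs are hypothetical placeholders, not part of the original setup; the XML layout follows the public sitemaps.org protocol.

```javascript
// Hypothetical helper: escapes characters that are not allowed raw in XML text.
function escapeXml(value) {
  return value
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;')
}

// Hypothetical helper: turns a list of { loc, lastmod } entries into sitemap XML.
// `lastmod` is optional and is only written when it is provided.
function buildSitemap(pages) {
  const urls = pages.map(({ loc, lastmod }) => {
    const parts = [`    <loc>${escapeXml(loc)}</loc>`]
    if (lastmod) parts.push(`    <lastmod>${lastmod}</lastmod>`)
    return `  <url>\n${parts.join('\n')}\n  </url>`
  })
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...urls,
    '</urlset>',
  ].join('\n') + '\n'
}

// Placeholder pages; replace these with the real URLs of your site.
const sitemap = buildSitemap([
  { loc: 'https://www.example.com/', lastmod: '2021-01-01' },
  { loc: 'https://www.example.com/blog/hello-world?ref=a&b=1' },
])
console.log(sitemap)
```

The printed XML could be saved as sitemap.xml in whichever folder the server reads the file from.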

In your server.js, add a route so that whenever your app gets a request to the URL /sitemap.xml, it serves the sitemap.xml file that you generated online: `server.get('/sitemap.xml', (req, res) => res.status(200).sendFile('sitemap.xml', sitemapOptions))`. Because 'sitemap.xml' is a relative path, `sitemapOptions` must set `root` to the folder that holds the file. Now you can visit /sitemap.xml and see your sitemap. You can submit this URL to Google as your sitemap.
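
With the sitemap reachable at /sitemap.xml, robots.txt can point crawlers at it. The sketch below builds the file's contents as described earlier: two lines that allow the whole site to be crawled, then a line with the sitemap location. The exact rules are an assumption based on that description, `buildRobotsTxt` is a hypothetical helper, and `https://www.example.com` is a placeholder domain.

```javascript
// Hypothetical helper: builds the text of a robots.txt file.
// `siteUrl` is a placeholder; replace it with your own domain.
function buildRobotsTxt(siteUrl) {
  // Strip trailing slashes so the sitemap URL has exactly one separator.
  const base = siteUrl.replace(/\/+$/, '')
  return [
    'User-agent: *',                // the rules apply to every crawler
    'Allow: /',                     // the entire site may be crawled
    `Sitemap: ${base}/sitemap.xml`, // where crawlers can find the sitemap
  ].join('\n') + '\n'
}

const robotsTxt = buildRobotsTxt('https://www.example.com/')
console.log(robotsTxt)
```

Saving the printed text as robots.txt in the /public folder makes it available at the website root.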
