I considered outputting it at 6k, but the reality is that most people don't even have a 4k monitor, much less a 6k monitor, at 1080p, it should give us a realistic idea of the benefit to an end user in encoding to bit depths higher than 8-bit.Īs a side note, for $2500, this camera is one hell of a value, 13 stops, capable of recording to RAW or ProRes, full 6k resolution, if I were running a streaming site, with unique content, I would author and stream in 5k as a way of differentiating my site from competitors. When you load this in Handbrake, it automatically crops it 448 top and bottom to 6144x2560 and I outputted it as 1920x1080 storage resolution, 2592x1080 2.40:1 display resolution.

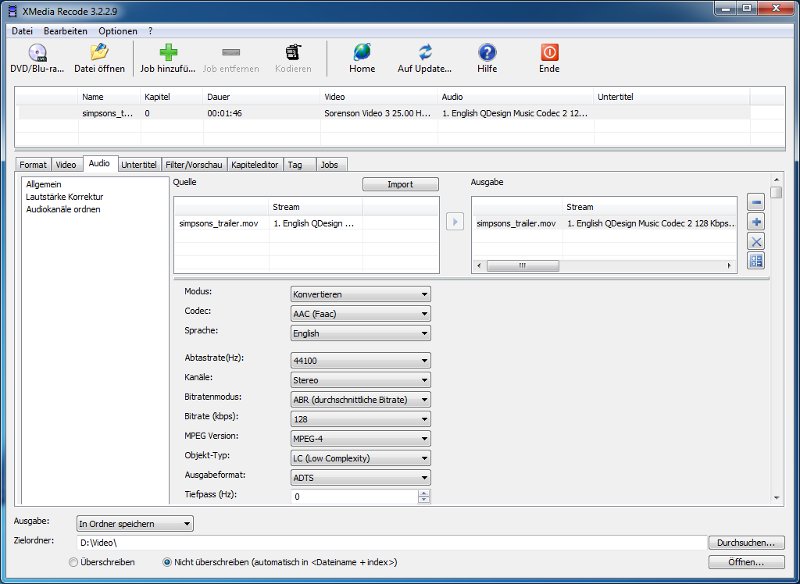

The source is ProRes, 1034 Mb/s, 6144x3456, 24fps, 422 and I used the latest build of Handbrake on Manjaro, with all the updates. So I decided to perform a test, using the source named BMPCC6K_Wedding.mov, from here: But most monitors only support 8-bit, some support a pseudo 10-bit output, and as far as I know there are no consumer tv's that currently support 10-bit output.įurther compounding my skepticism is that some, if not all, non-pro encoding front ends, such as handbrake, despite being able to output a 10-bit or 10/12-bit video (in the case of x265), the internal processing is still done in 420 8-bit. In order to display a true 10-bit image, you need a video card, drivers and monitor that support true 10-bit output. One of the objections revolved around the source I proposed for his test and his claims that since some of the encoders to be tested support 10-bit encoding and some don't, that it would unfairly penalize the 8-bit encoders by using a 422 10-bit source.ĭespite mocking him, I spent some time thinking about this and no matter with way I look at it, I can't escape one very simple reality, it probably doesn't matter whether or not you encode to 8-bit or 10-bit because the final encode will be viewed in nearly all cases on an 8-bit monitor, which means that the 10-bit encoding needs to somehow be mapped to an 8-bit display. However, a recent discussion with a sparing partner on this forum led me to start rethinking this position, especially as i watched him tap dance around the offer he made and I accepted only for him to back out of with silly objections. This seems like a reasonably conclusion, and in fact I admit I am guilty of offering this advice in the past. The generally accepted answer is that even with an 8-bit source there are benefits to encoding to 10-bit because, the argument goes, of smoother color gradients due to higher precision math. 
This question has been asked, and in theory, answered numerous times, with the topic usually popping up as it related to x264 vs x264 10-bit.
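
To put a rough number on the point about 10-bit having to be mapped to an 8-bit display, here is a minimal Python sketch. It is only an illustration under assumptions of my own, not anything Handbrake or x264/x265 actually does: the function names are hypothetical, the gradient is synthetic, the codes are full range rather than video range, and the display is assumed to simply drop the two low bits with no dithering.

```python
def quantize(value, bits):
    """Map a normalized value in [0.0, 1.0] to a full-range integer code at the given bit depth."""
    max_code = (1 << bits) - 1
    return round(value * max_code)


def to_8bit(code_10bit):
    """Reduce a 10-bit code to 8 bits by dropping the two least significant bits (assumed: no dithering)."""
    return code_10bit >> 2


# A subtle horizontal gradient: 1920 samples spanning 10% of the brightness range.
width = 1920
ramp = [0.40 + 0.10 * x / (width - 1) for x in range(width)]

# Distinct code values (visible "steps") the gradient occupies at each depth.
levels_8 = {quantize(v, 8) for v in ramp}
levels_10 = {quantize(v, 10) for v in ramp}
levels_10_on_8bit_display = {to_8bit(quantize(v, 10)) for v in ramp}

print("8-bit steps:", len(levels_8))
print("10-bit steps:", len(levels_10))
print("10-bit shown on an 8-bit display:", len(levels_10_on_8bit_display))
```

The 10-bit ramp has roughly four times as many steps as the 8-bit one, but once it is truncated for an 8-bit panel the step count falls back to about the 8-bit figure. The sketch says nothing about what higher internal precision does inside the encoder itself, which is the part the test above is meant to look at.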
